A movement that’s meeting the moment.

Impact for us isn’t confined to a number; it’s about what that number represents. It’s not just 133,500 people employed, but what those 133,500 people inspire as they step into a new beginning. It’s not just $2.5 billion in revenue earned, but what that level of market adoption signals for social enterprise. Most of all, it’s what becomes possible when we are all defined by the talent we hold, not the barriers we face. It’s about accelerating a movement that’s meeting the moment.

133.5k people employed

$2.5B+ revenue earned

Featured Enterprises

When employment social enterprises (ESEs) thrive, society thrives.

When these businesses succeed, they open doors to stability, economic mobility, and possibility.

Inspiring Stories

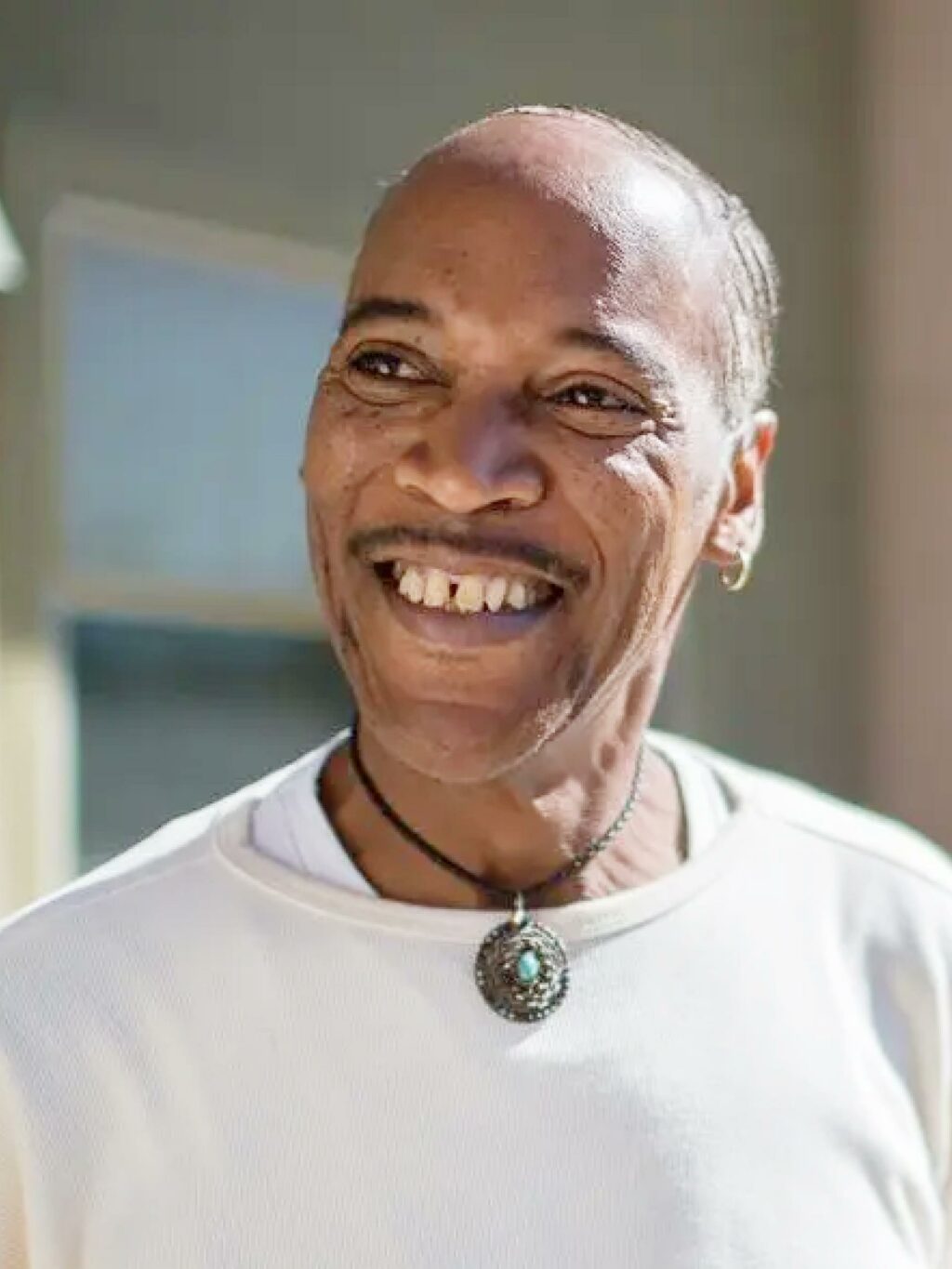

ESEs have helped reveal and reinforce the talent of over 133,500 people around the country. These are just a few of their stories.

Leaning Into Our Learning Edge

The challenges before us don’t stay still, so neither do we. We surface what works while holding space to learn from what doesn’t, and we translate both into actionable insights for the field.